| Frequency | Data Speed | Distance |
| --- | --- | --- |
| 5 GHz | High speed | Low distance |
| 2.4 GHz | Low speed | High distance |

2.4 GHz vs 5 GHz WiFi

Frequency use is regulated in most countries, so that nobody can set up a transmitter that blocks radio signals and stops telephones from working. It’s why Christian Slater’s movie Pump Up the Volume shows the dangers of rogue pirate radio stations: it just gets those teenagers with their rock and roll in trouble.

So when engineers looked over the available spectrum, two candidates came to the front: 5 GHz and 2.4 GHz. As explained earlier, light does weird things, but the one thing to know here is that the higher the frequency, the more waves fit in the same space, and the more data the signal can carry.
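To put rough numbers on "more waves in the same space," here is a minimal sketch that turns each frequency into a wavelength using the standard relation wavelength = speed of light / frequency. The function names are made up for this illustration, and the calculation assumes the signal is travelling through open air.

```python
# Speed of light in a vacuum, in metres per second (air is very close to this).
SPEED_OF_LIGHT_M_PER_S = 299_792_458


def wavelength_cm(frequency_hz: float) -> float:
    """Length of one full wave, in centimetres (illustrative helper)."""
    return SPEED_OF_LIGHT_M_PER_S / frequency_hz * 100


def waves_per_metre(frequency_hz: float) -> float:
    """How many full waves fit into one metre of space (illustrative helper)."""
    return frequency_hz / SPEED_OF_LIGHT_M_PER_S


for label, frequency_hz in (("2.4 GHz", 2.4e9), ("5 GHz", 5.0e9)):
    print(
        f"{label}: one wave is about {wavelength_cm(frequency_hz):.1f} cm long, "
        f"so about {waves_per_metre(frequency_hz):.1f} waves fit in a metre"
    )
```

A 2.4 GHz wave works out to roughly 12.5 cm long and a 5 GHz wave to roughly 6 cm, so about twice as many 5 GHz waves fit into the same stretch of space.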

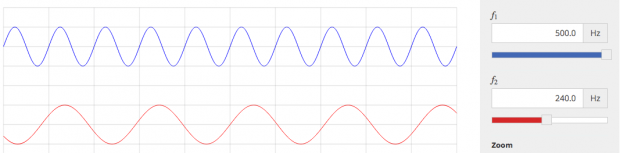

Here’s an idea of how those waves would look if you could see them. 5 GHz has shorter waves (more waves in the same area) than 2.4 GHz.

Different frequencies behave differently. At 5 GHz, more data can be carried, because there are more ups and downs every second (which the computer represents as 1’s and 0’s). The problem is that higher-frequency light has a harder time going as far. Those tighter waves have more trouble getting through solid objects like walls, and a high-frequency signal loses its energy faster than a lower-frequency one.

At 2.4 GHz, not as much information can be transmitted, but because the lower-frequency signal loses its energy more slowly, it can travel further before it degrades.
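The range trade-off can be sketched with the textbook free-space path loss formula, which estimates how much weaker a signal gets over a given distance. This is only an idealisation: it assumes open space with simple antennas and ignores walls, furniture, and interference, which usually matter even more in a real home. The function name and the sample distances are chosen just for this example.

```python
import math

# Speed of light in a vacuum, in metres per second.
SPEED_OF_LIGHT_M_PER_S = 299_792_458


def free_space_path_loss_db(distance_m: float, frequency_hz: float) -> float:
    """Idealised signal loss in decibels over open space (no walls, no obstacles)."""
    return 20 * math.log10(
        4 * math.pi * distance_m * frequency_hz / SPEED_OF_LIGHT_M_PER_S
    )


for distance_m in (1, 10, 30):
    loss_2_4_ghz = free_space_path_loss_db(distance_m, 2.4e9)
    loss_5_ghz = free_space_path_loss_db(distance_m, 5.0e9)
    print(
        f"{distance_m:>2} m: 2.4 GHz loses {loss_2_4_ghz:.1f} dB, "
        f"5 GHz loses {loss_5_ghz:.1f} dB "
        f"(gap: {loss_5_ghz - loss_2_4_ghz:.1f} dB)"
    )
```

In this simplified model the 5 GHz signal always comes out about 6.4 dB weaker than the 2.4 GHz signal at the same distance, which means roughly a quarter of the power arrives. Walls tend to widen that gap further.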

Going back to when WiFi was first being invented, engineers had to decide what to do. Did they want a lot of data, or did they want a lot of range?

Turns out, the answer was “yes.” Two standards were created: 802.11a and 802.11b. 802.11a would use the 5 GHz frequency, and 802.11b would use 2.4 GHz. Problem solved! Everybody is happy! Some routers were made to support only one of these two standards, but over time manufacturers developed routers that could support both.

This played out later on, when new WiFi standards were made backwards compatible with 802.11a or 802.11b (for example, 802.11g networks are backwards compatible with 802.11b, which uses 2.4 GHz).